The Feedback Window:

What 4 Million Submissions Reveal About When Learning Actually Happens

BusyBee data across 600+ school domains, read against decades of peer-reviewed research on feedback.

A student submits a writing assignment on Tuesday afternoon. Her teacher is managing 30 other students, three prep periods, and a grading backlog that started last Thursday. The feedback arrives Friday, three days later. By then, she’s already on the next unit.

That gap, the time between a student completing work and receiving feedback on it, has a name in the research literature: feedback latency. The evidence on what it costs students is more specific than most educators realize.

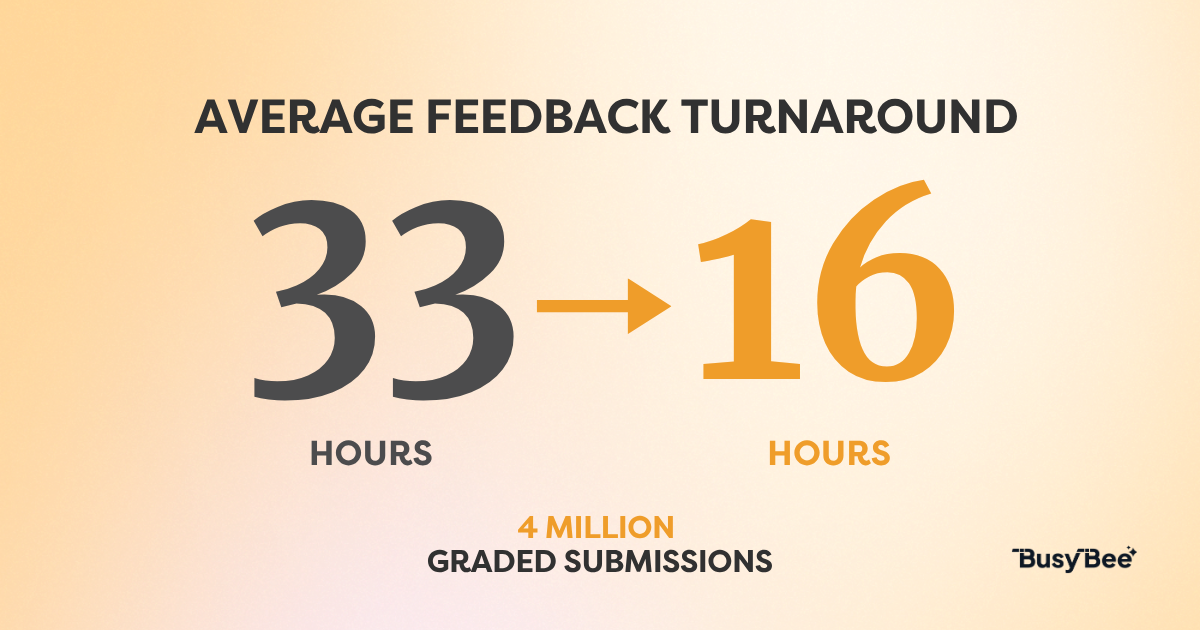

Agilix Labs announced today that BusyBee, its AI grading assistant built on Amazon Bedrock, has now processed more than 4 million K–12 student submissions across 600+ school domains. That dataset is large enough to support a meaningful argument: cutting feedback latency roughly in half, from 33 hours to 16, is not primarily a teacher efficiency story. It is a student learning story.

Feedback is one of the most studied interventions in education. The evidence for its value is robust across decades and methodologies.

RESEARCH FINDING

A 2020 meta-analysis of 435 studies involving more than 61,000 students found a medium effect size of 0.48 for feedback on student learning — placing it among the most powerful instructional interventions available to teachers. Specific written comments, not grades alone, produced the strongest effects.

Wisniewski, B., Zierer, K., & Hattie, J. (2020). “The Power of Feedback Revisited: A Meta-Analysis of Educational Feedback Research.” Frontiers in Psychology, 10, 3087.

RESEARCH FINDING

A peer-reviewed study at Bond University, which returned written feedback 1, 3, 7, 10, or 14 days after submission, found that student motivation dropped significantly when feedback took more than 10 days. Feedback turnaround was a statistically significant determinant of motivation. Note: This study examined university students, and no equivalent K–12 timing threshold has been established in peer-reviewed literature. The direction of the finding likely carries over to younger learners, but the precise threshold may differ.

Fisher, D.P., Brotto, G., Lim, I., & Southam, C. (2025). “The Impact of Timely Formative Feedback on University Student Motivation.” Assessment & Evaluation in Higher Education, 50(4), 622–631.

In online and self-paced environments, the settings where BusyBee operates, the cost of slow feedback is more acute. Without a shared classroom, the feedback cycle is the primary instructional loop. When that loop is slow, students don’t just get feedback late. They move on without it.

RESEARCH FINDING

Kluger & DeNisi’s foundational Feedback Intervention Theory, based on a meta-analysis of 607 effect sizes, established that feedback effectiveness depends critically on whether it directs student attention toward the task or away from it. Applied to timing, the implication is that when the link between effort, feedback, and improvement becomes unclear, as it does when feedback is slow or absent, students are more likely to disengage from the learning task. That risk is likely greater in online and self-paced settings, where no classroom structure prompts students to re-engage.

Kluger, A.N., & DeNisi, A. (1996). “The Effects of Feedback Interventions on Performance.” Psychological Bulletin, 119(2), 254–284.

Why Teachers Can’t Close This Gap Alone

The research makes a strong case for fast feedback. The problem is structural: teachers are not resourced to deliver it consistently.

49

Average hours per week teachers work, 10 more than their contracted time (RAND, 2025 State of the American Teacher Survey)

0.48

Overall effect size of feedback on student learning — one of the highest-ranked instructional interventions in Visible Learning research (Wisniewski, Zierer & Hattie, 2020)

The RAND Corporation’s 2025 State of the American Teacher Survey found that teachers work an average of 49 hours per week — 10 hours beyond their contracted time. Lesson planning, administrative tasks, and grading compete for the same finite hours. Written feedback, which the research identifies as the most effective feedback type, is also the most time-intensive to produce.

In self-paced and online K–12 programs, the load compounds further. A teacher managing a self-paced cohort may receive submissions from 30 students on 30 different assignments, at any hour, on any day. There is no end-of-class moment when grading happens. It happens whenever there is time — which is rarely soon enough.

What the BusyBee Data Shows at Scale

At eLearning Academy, one of BusyBee’s longest-standing schools, grading time dropped 80 percent, and feedback reached students in under 24 hours instead of two to three days. That shift from days to hours brings feedback closer to the work it responds to, which is where the research suggests it does the most for student motivation and engagement.

Across the full platform, teachers save an estimated 4,200 hours of grading time each week. In its highest-volume week, BusyBee processed 171,000 submissions — nearly 15 percent above the previous record.

Those numbers are operational evidence that cutting feedback latency roughly in half is achievable at K–12 production scale with teacher judgment kept in the loop. The research suggests this matters for students, and the platform data is consistent with that conclusion.

“BusyBee is the springboard and starting point for effective, informed grading.”

— Caroline Hebert, High School Teacher, eLearning Academy

Teacher Judgment Stays in the Loop

Delivering feedback faster only matters if the feedback itself is accurate. BusyBee is built around a teacher-review requirement: every AI-drafted suggestion passes through the teacher before a student sees it. Nothing is released automatically.

That design choice shows up in the acceptance-rate data. When BusyBee launched in Spring 2025, teachers accepted the AI’s suggested feedback 48 percent of the time. That figure reflects teachers doing exactly what they should: exercising professional judgment, not rubber-stamping.

By Spring 2026, acceptance had reached 63 percent. Each increase along the way traced to a specific technical change: improved prompts, foundation model updates, or better rubric alignment. The rise suggests that AI-drafted feedback has come to align more closely with teacher expectations, so teachers less often need to substantially revise suggestions before release. As with any acceptance-rate metric, this should be read alongside measures of edit depth to distinguish genuine improvement from teacher habituation.

A 15-point rise across four million real submissions is a strong signal of AI product improvement in K–12 education, and an honest one: teachers are still the ones deciding what students see.

See BusyBee in Action

If you're a curriculum publisher or virtual school looking to give teachers more time for the work that matters, we'd like to show you what BusyBee looks like inside your program.


BusyBee is part of the Agilix Learning Suite, built for K–12 curriculum publishers and virtual schools.